I’m by no means the first person to write about the dangers of rewriting code. The definitive work for me is “Things You Should Never Do, Part I” written by Joel Spolsky in 2000. If you haven’t read that, you should.

A recent discussion prompted me to create a series of charts illustrating the dangers of rewriting. I tend to think graphically – blame too much time in PowerPoint creating VC pitch decks.

I hope these charts help anyone considering rewriting code or being hectored by earnest, bright, young engineers and architects advocating a rewrite. I’ve seen this movie before and I know how it ends.

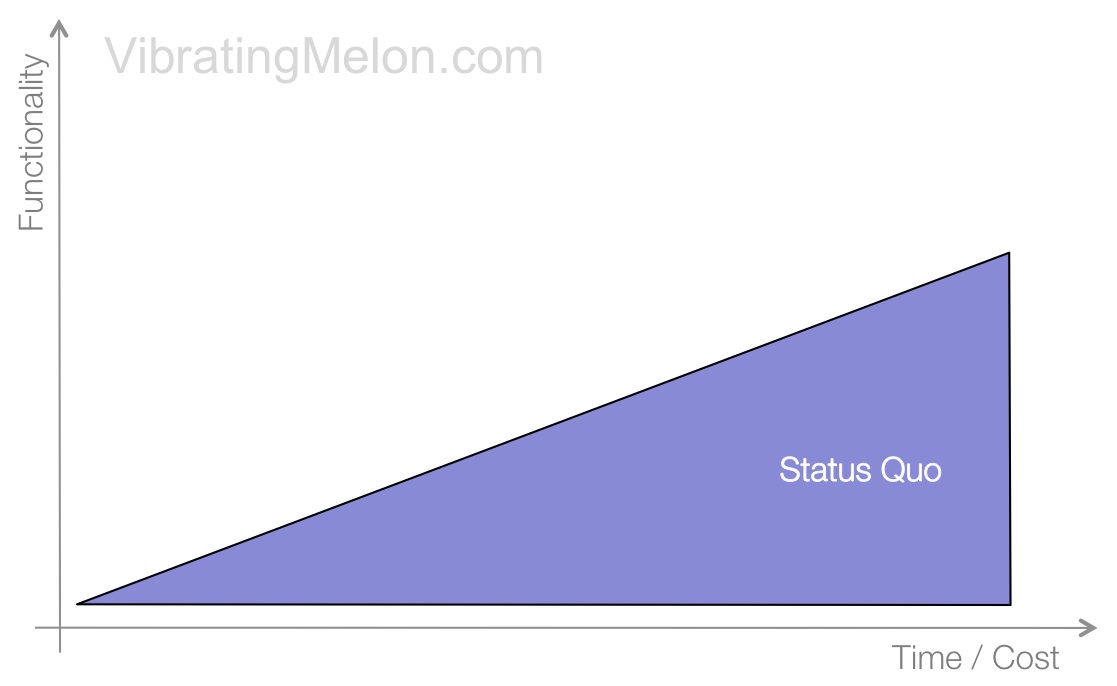

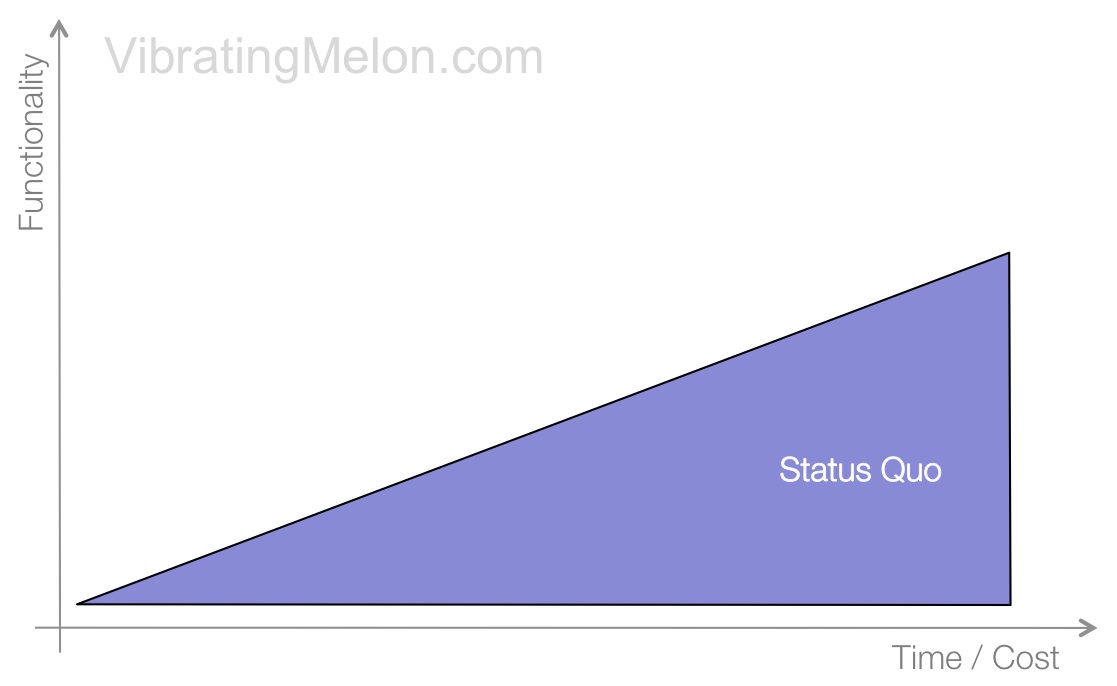

The Status Quo

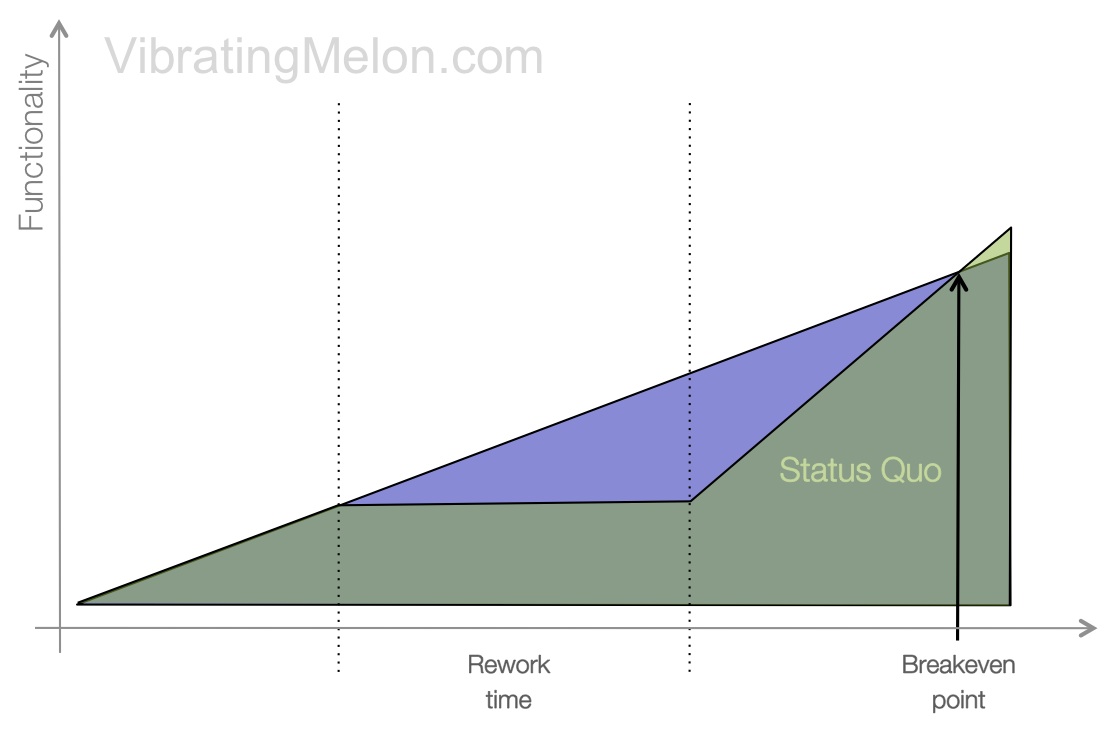

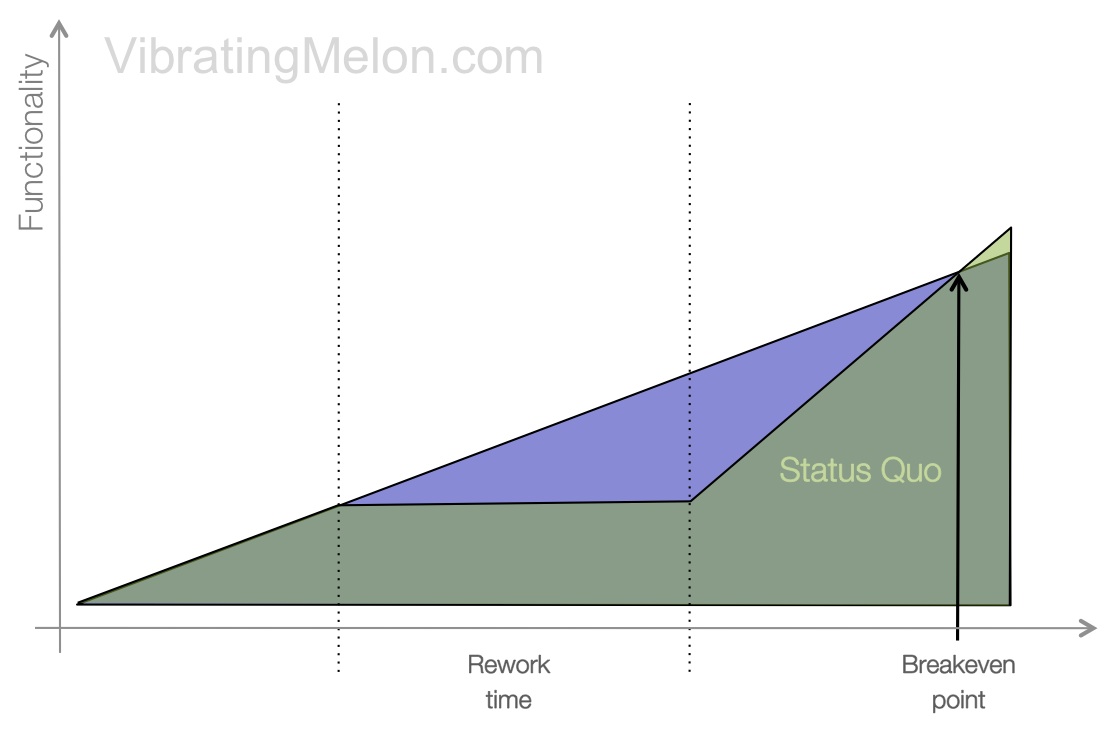

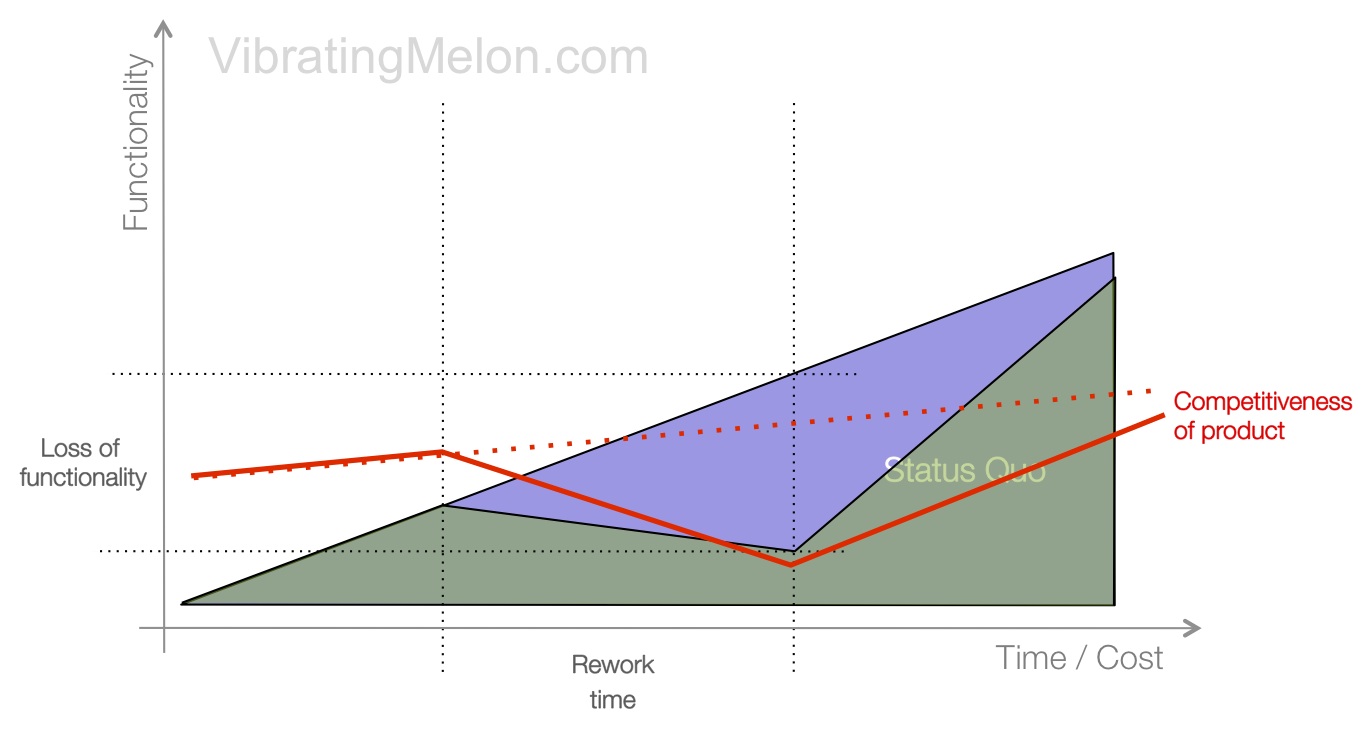

Firstly, here’s the status quo:

The more time and money you spend on an existing product, generally speaking, the more functionality you get.

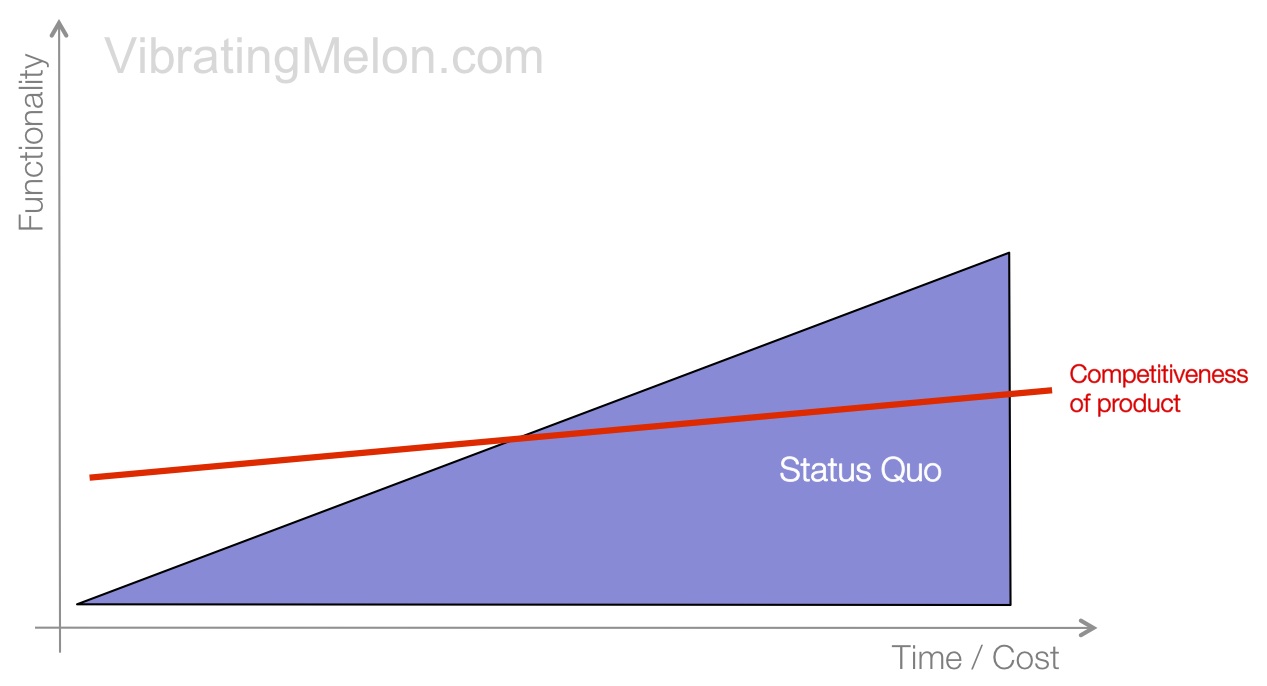

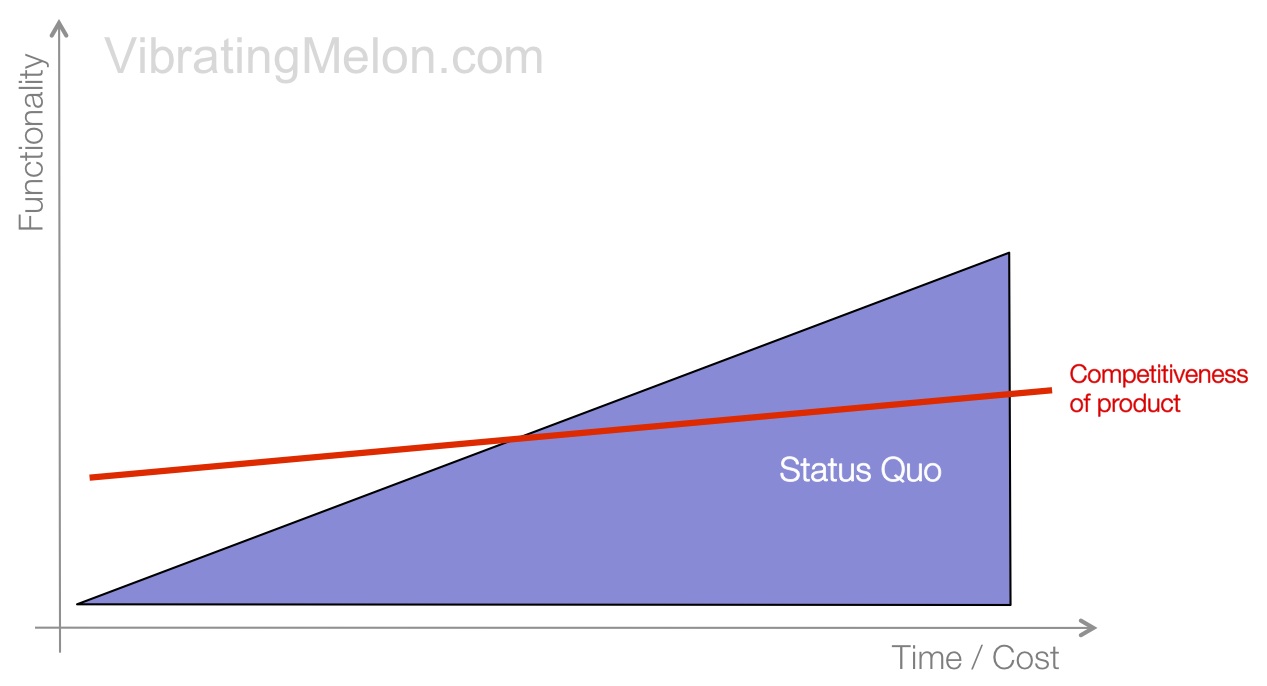

Let’s then overlay the competitiveness of the product in its target market. Of course, what you want to achieve is a continual improvement in the competitiveness of the product. Competitiveness doesn’t increase as fast as functionality. Your competitors don’t stand still so, typically, the best you can hope for is to increase competitiveness gradually over time – even staying flat is a big challenge.

Of course, this chart is idealized – functionality will increase in a lumpier way as you release major new chunks of functionality, and competitiveness will move up and down against your market. However, it demonstrates the point.

Note: the Y axis on all these charts represents “functionality” which, for the purposes of this discussion, can be considered a blend of features and quality. The distinction between the two is not really important in this analysis since you always want to be advancing on one or both and they both impact overall product competitiveness.

Let’s Rewrite!

Cue your software architect. He’s just been reading about this great new application framework and language called Ban.an.as – it’s so much better than the way you’ve been doing things previously. Hell, Ban.an.as has built-in back-hashed inline quadroople integration comprehensions. Plus, all the cool kids are using it.

If you’re a non-technical manager or executive, this can quickly become overwhelming and hard to argue against. They’re the experts, right? So they must know what they’re talking about.

[By the way, if you’re a non-engineer, there’s no such thing as “back-hashed inline quadroople integration comprehensions” – I made that up. Sorry.

A little secret here: there really haven’t been any new programming paradigms invented since the 1970s. Software folks are generally in their 20s and didn’t see them the first time around – they just get “re-discovered” and given new names. Sssh – don’t tell anyone.]

Just to be clear – I’m not saying that new languages, frameworks and technologies can’t create significant improvements in developer productivity – they can. But, their introduction into an existing product has a big price, as we’ll see.

Lastly, lest I alienate or offend my fellow geeks, I have been that very software architect advocating for the rewrite. I learned this the hard way.

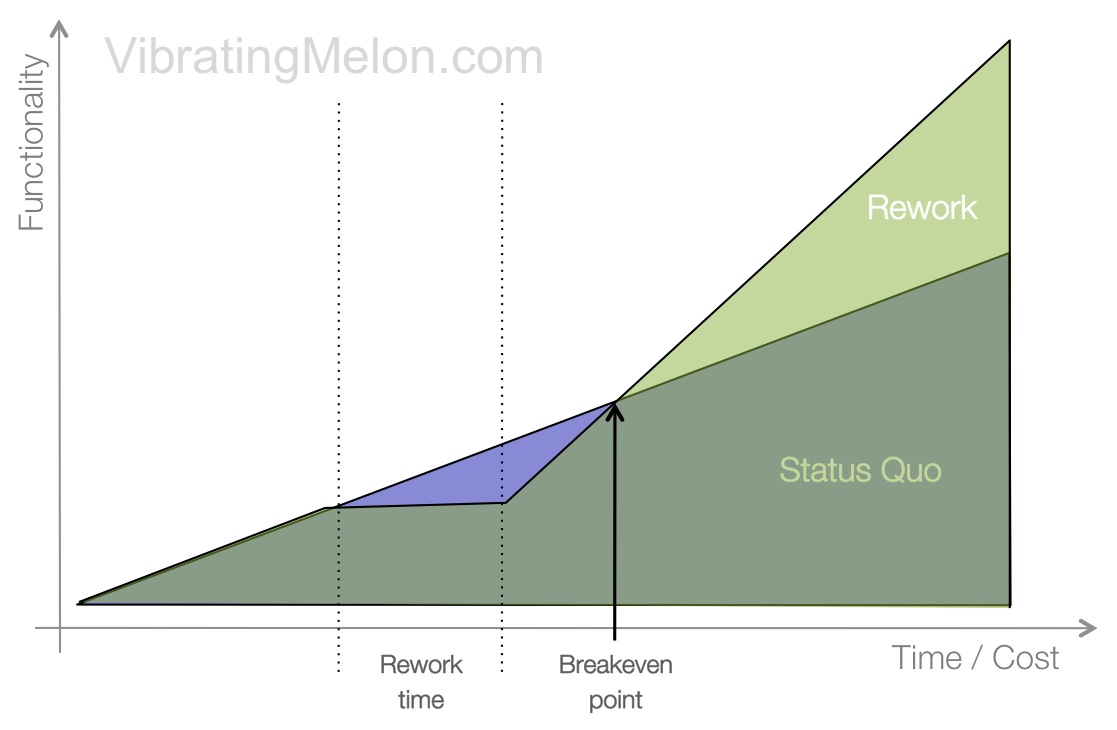

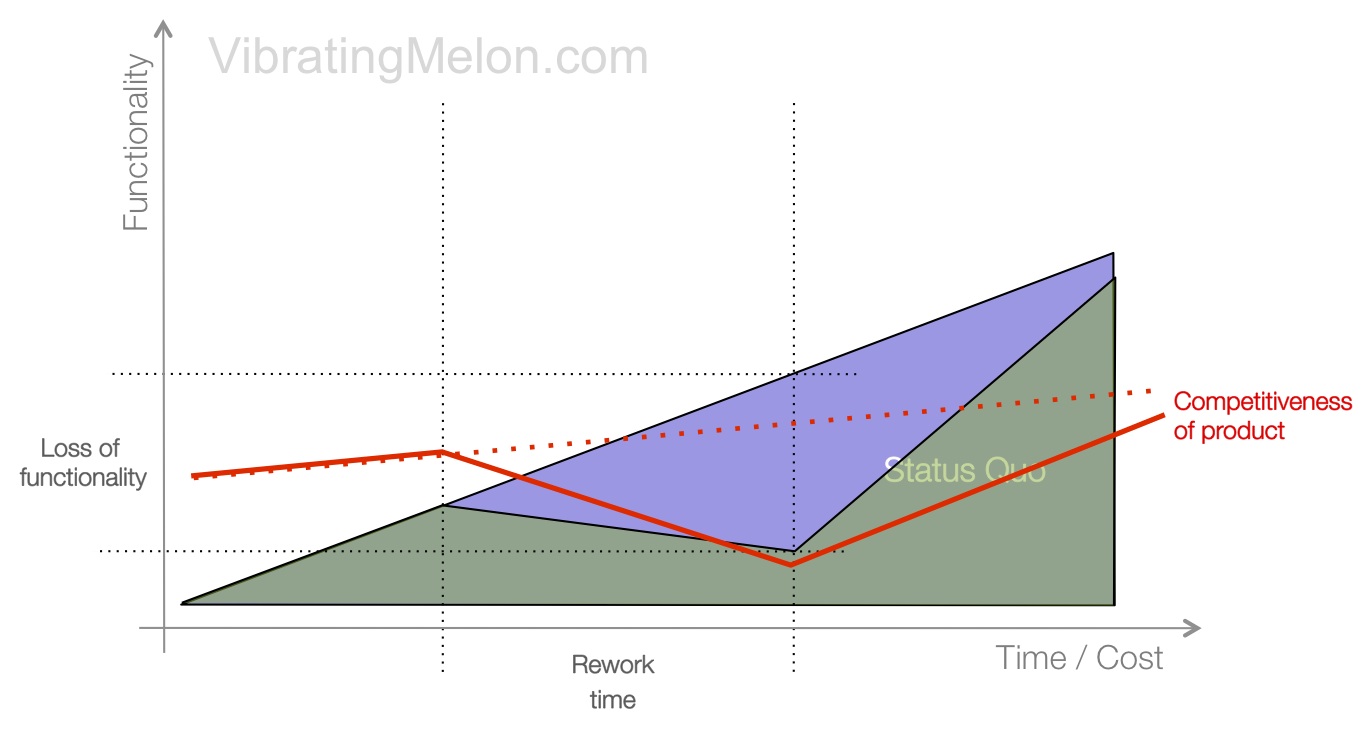

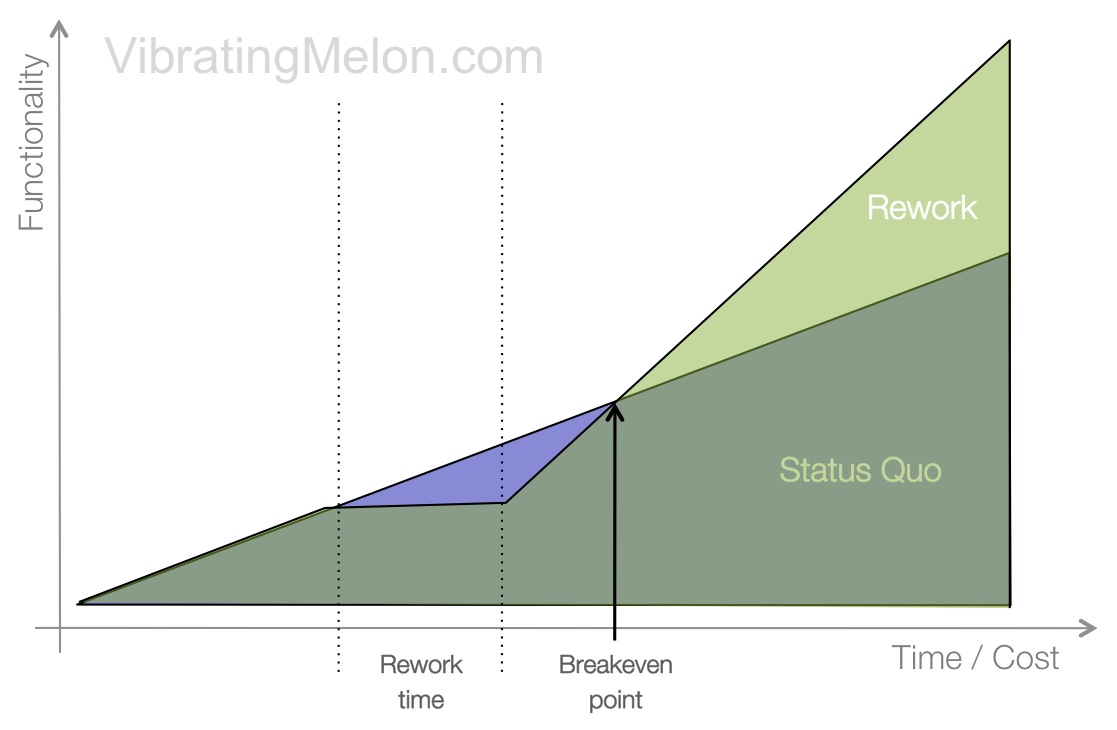

So, this is how the rewrite is supposed to work in theory:

Let’s break this down:

The rework is expected to take some amount of time. During this time, functionality won’t increase because developers are focusing on rebuilding the foundations.

In the graph, that’s the blue area you can see peeking through. That blue area represents the cost of the rewrite.

But, once the rewrite is done, the idea is that progress will be massively greater than it was previously because the new technology used is inherently better than the old. Developers will be more productive with the new. There’s no need to work with the old spaghetti code – instead, there’s a beautiful new architecture free of all the baggage that came before.

The critical point in time is the break-even point – this is the point at which the functionality of your product starts to exceed where you would have been had you stuck with the original implementation and continued working on it.

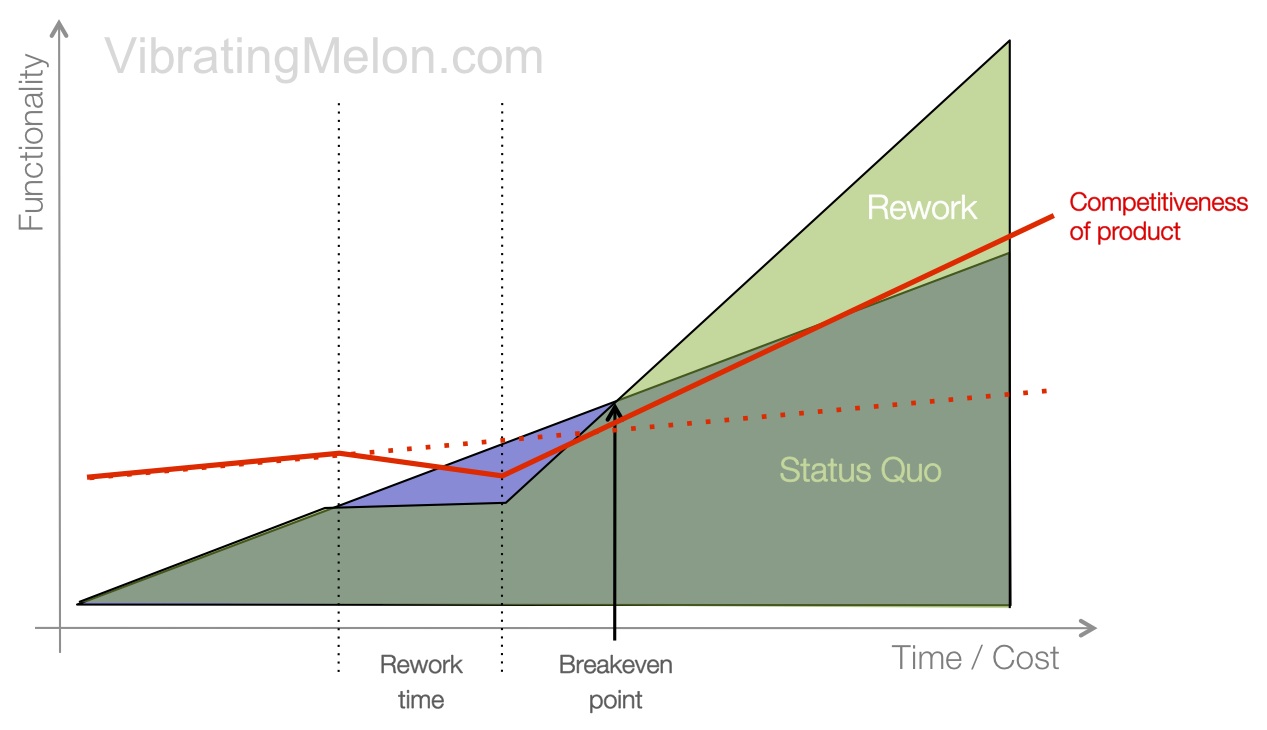

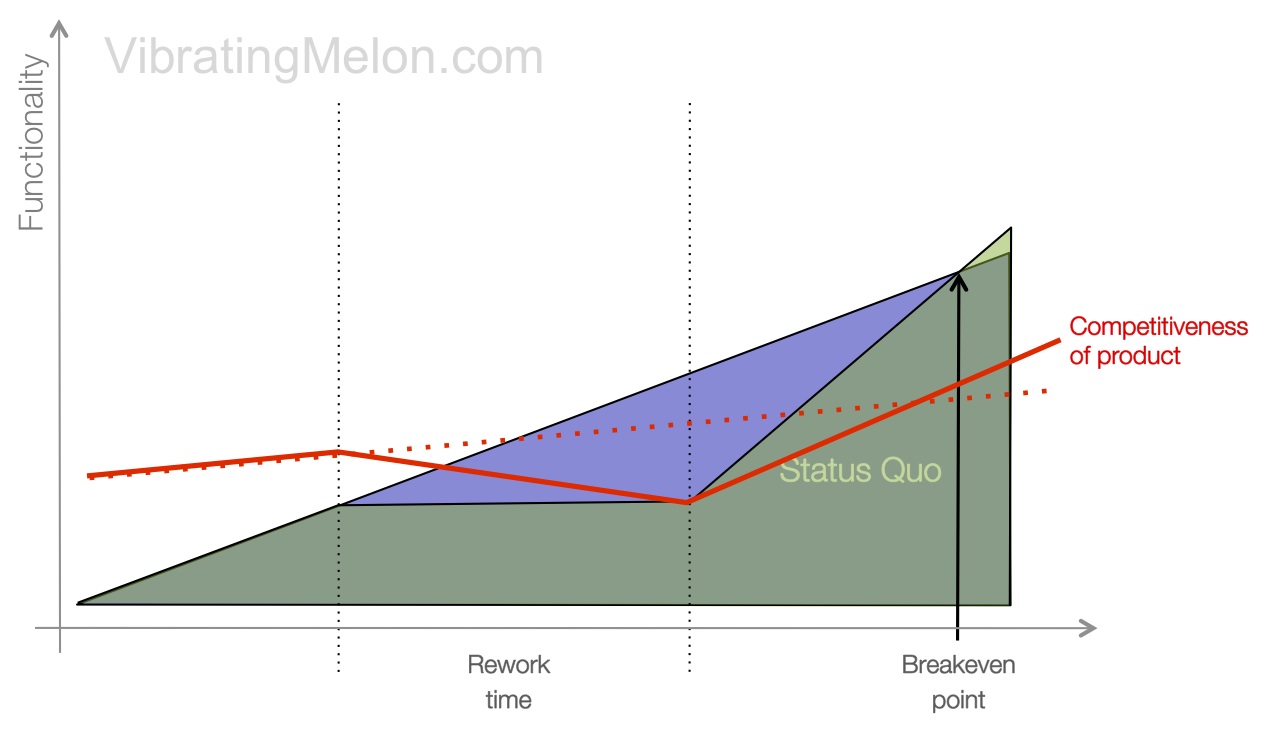

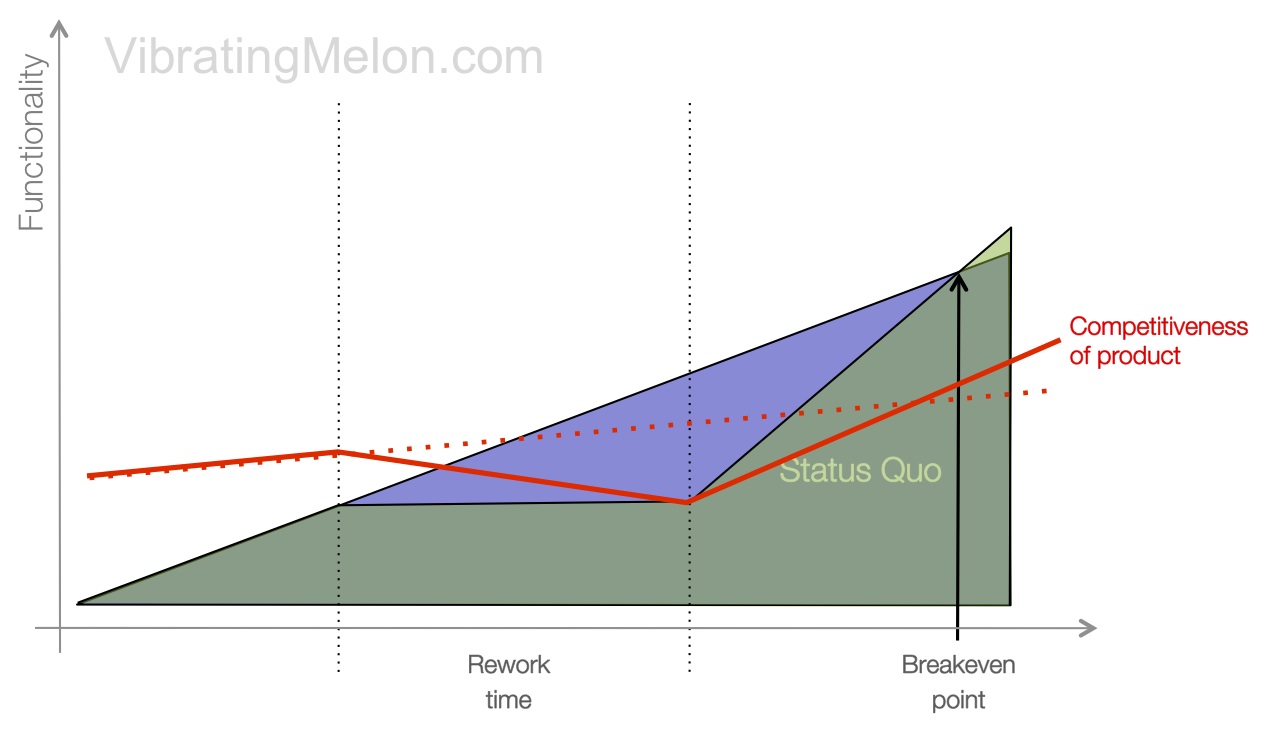

During the rewrite period, the competitiveness of your product will typically decline since your competitors are not standing still and you can’t develop new functionality.

However, the claim typically made by the rewrite advocates is that the rework will be relatively easy, take a relatively short period of time and hence achieve break-even quickly, after which it’s non-stop to the moon…

What tends to go wrong – Part 1

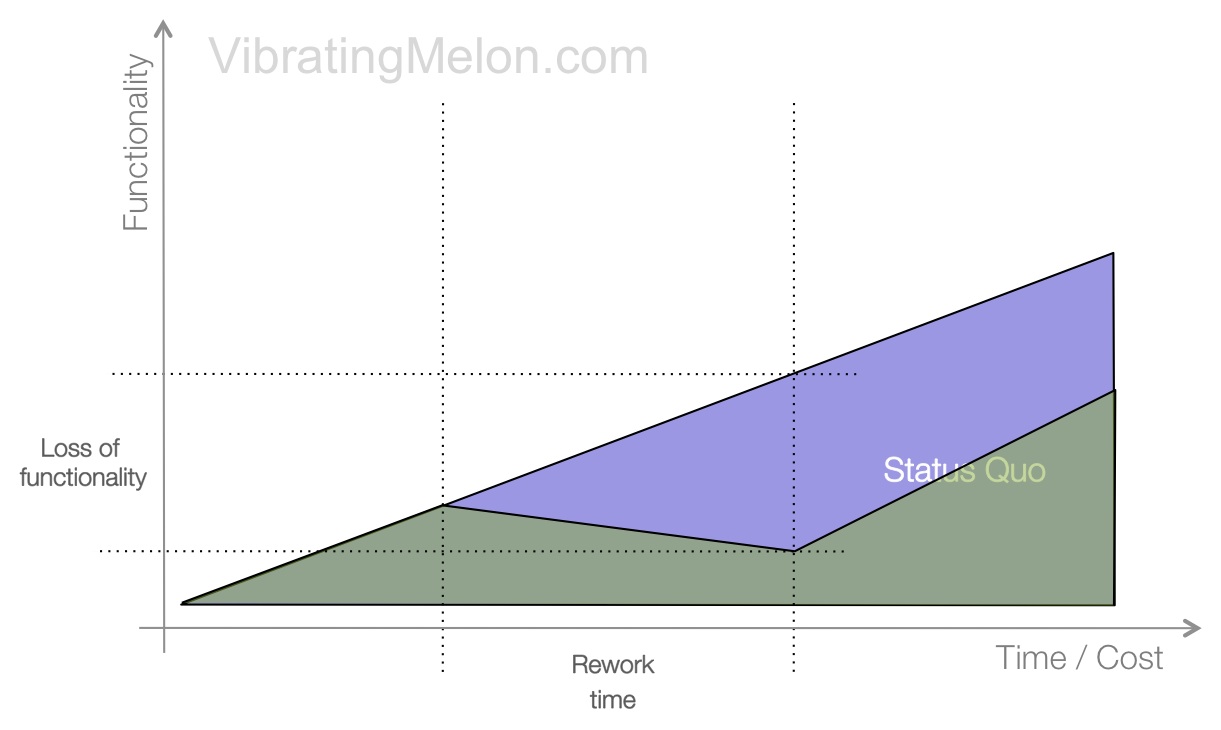

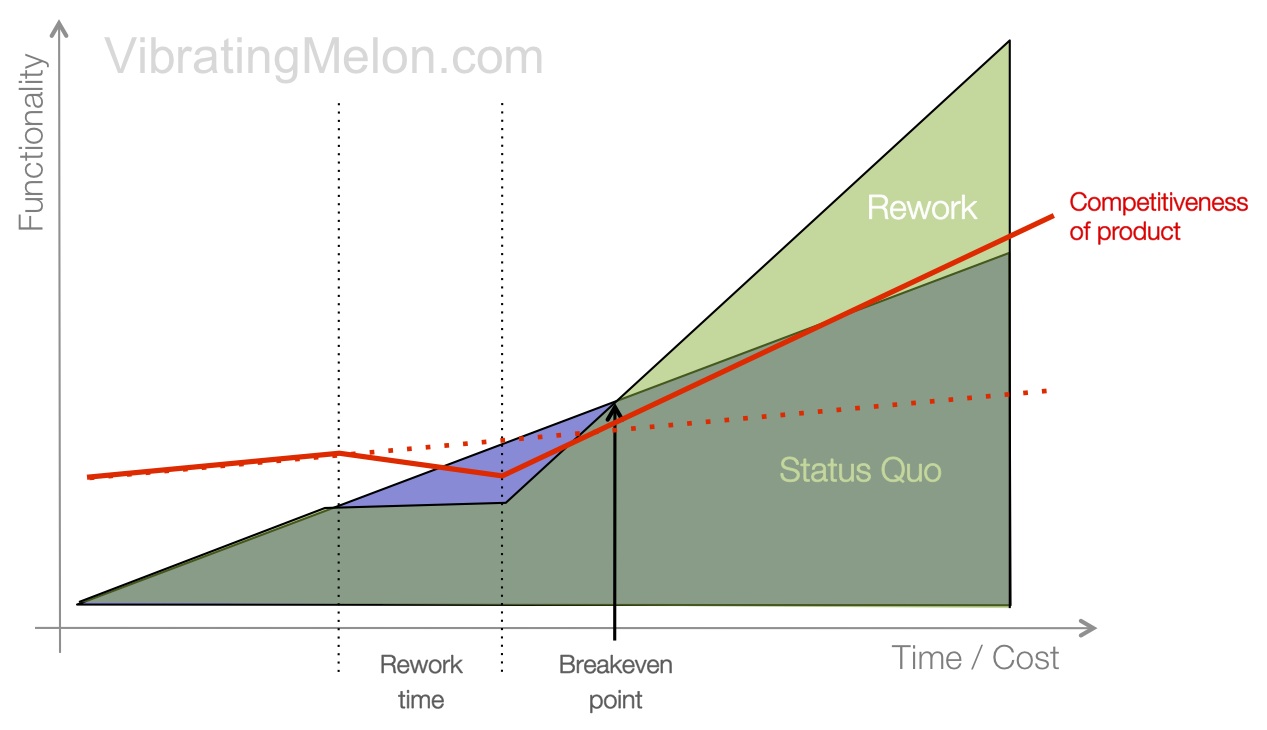

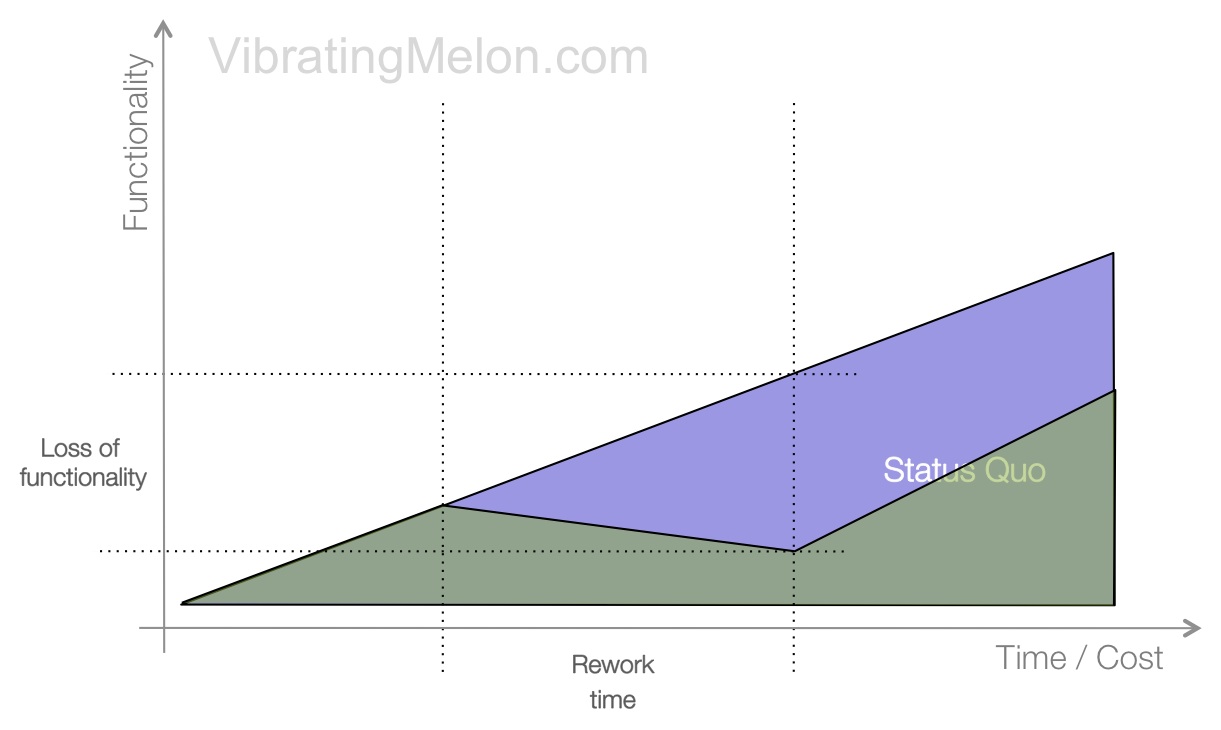

So, what tends to go wrong? The most common problem is arguably the most common problem in software development generally: the rewrite takes significantly longer than expected.

There’s a discussion of why this tends to happen below but, for now, trust me that this often happens – if you’ve had any experience with software development at all, it’s highly likely that you’ve seen this happen too.

The result is that the cost of your rewrite is significantly larger than originally claimed. (The blue area showing through on the chart is now much larger.) This means that the break-even point is also significantly pushed back in time.

The knock-on effect is that the competitiveness of your product drops for a much longer period of time.

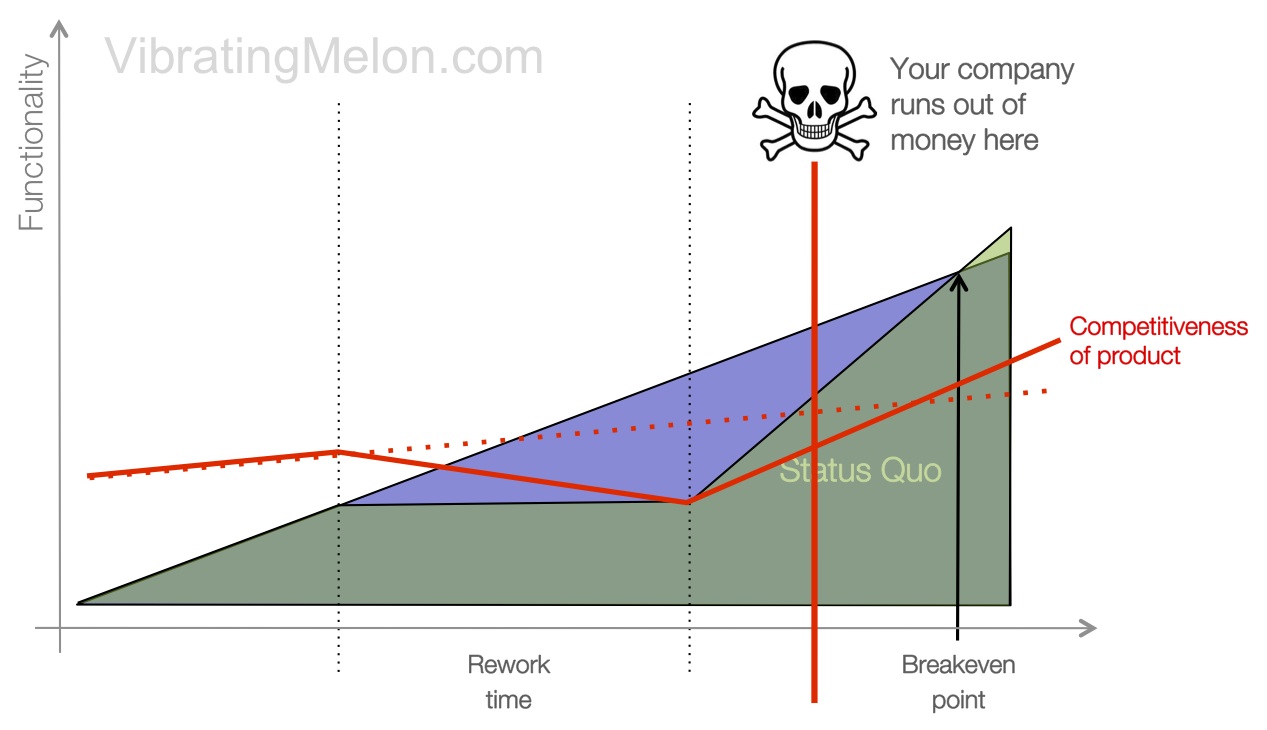

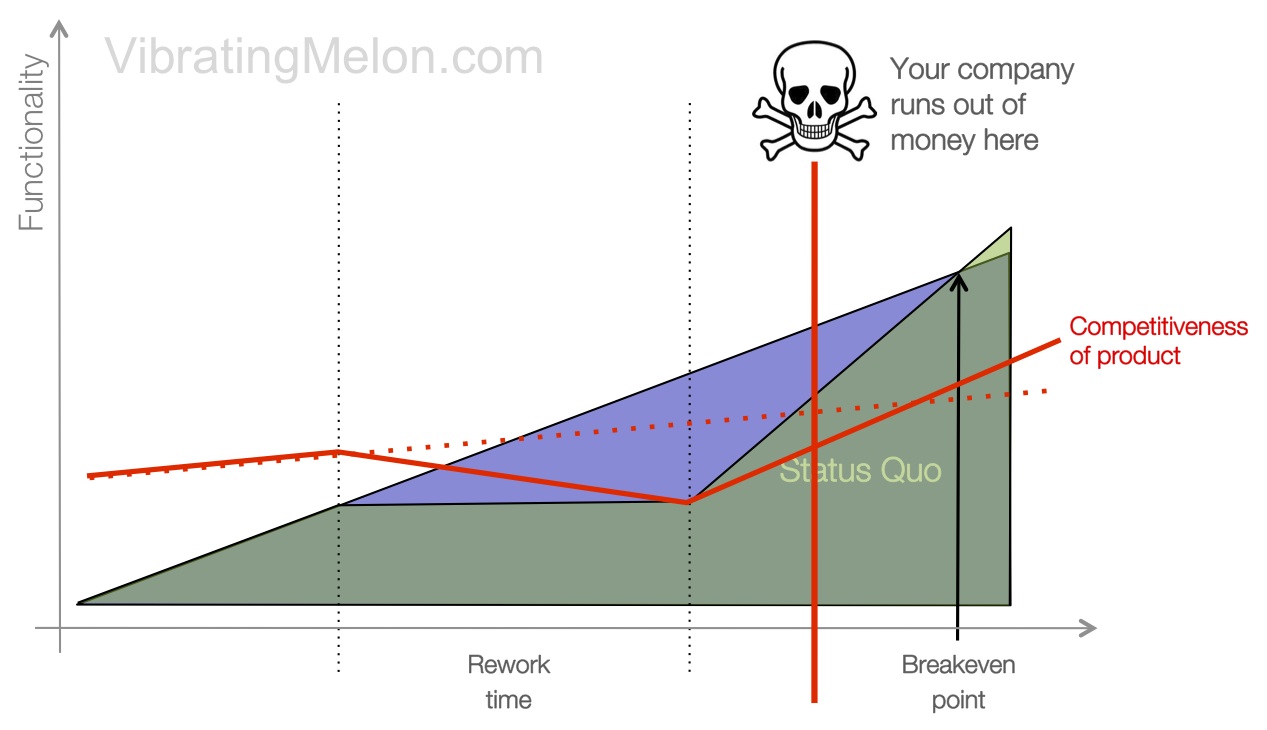

If you are a big company, you can probably (hopefully) absorb the pushed-out break-even point – maybe other products provide revenue, maybe good channel relationships continue to deliver sales of your product even though it’s falling behind the competition, or maybe you’ve simply got lots of cash reserves. Joel Spolsky references several big company rewrites that failed but did not kill the company.

However, if you are a startup or smaller company (or even a less fortunate bigger company), this can be – some might argue, is very likely to be – fatal.

What tends to go wrong – Part 2

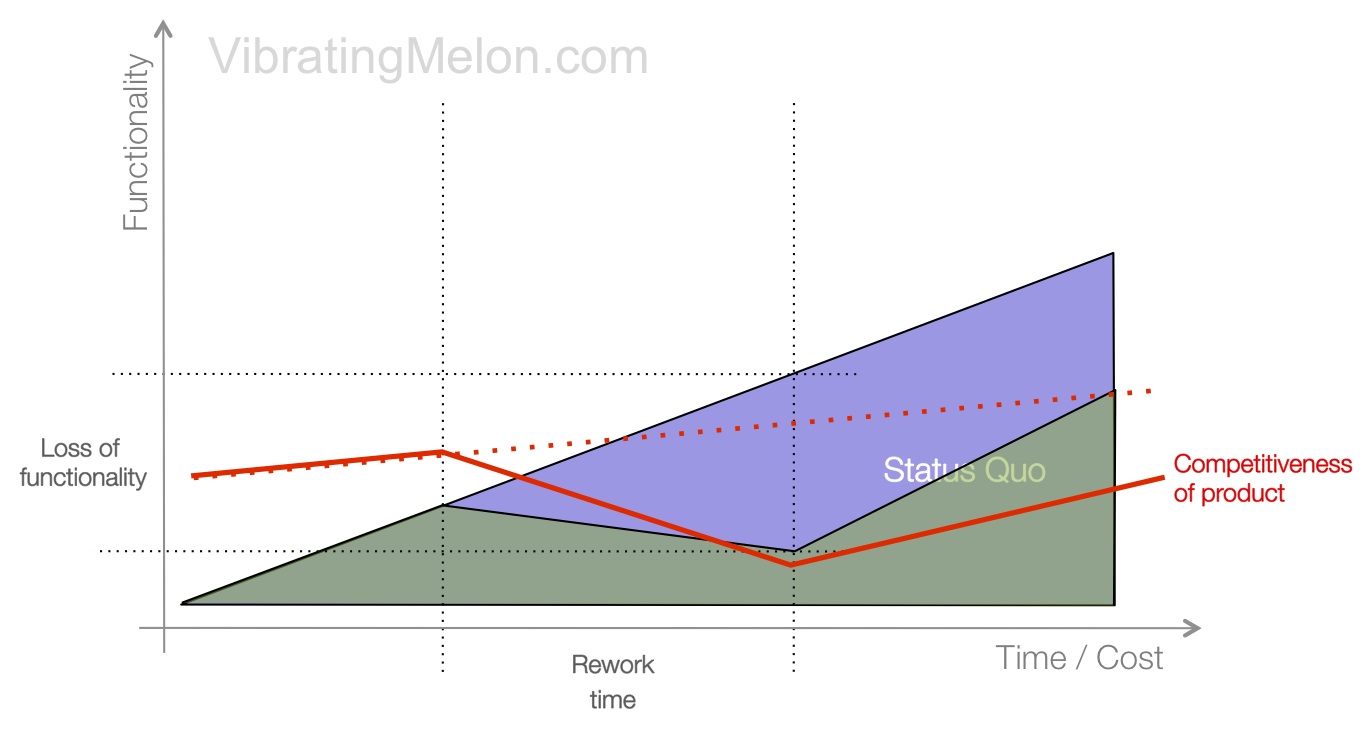

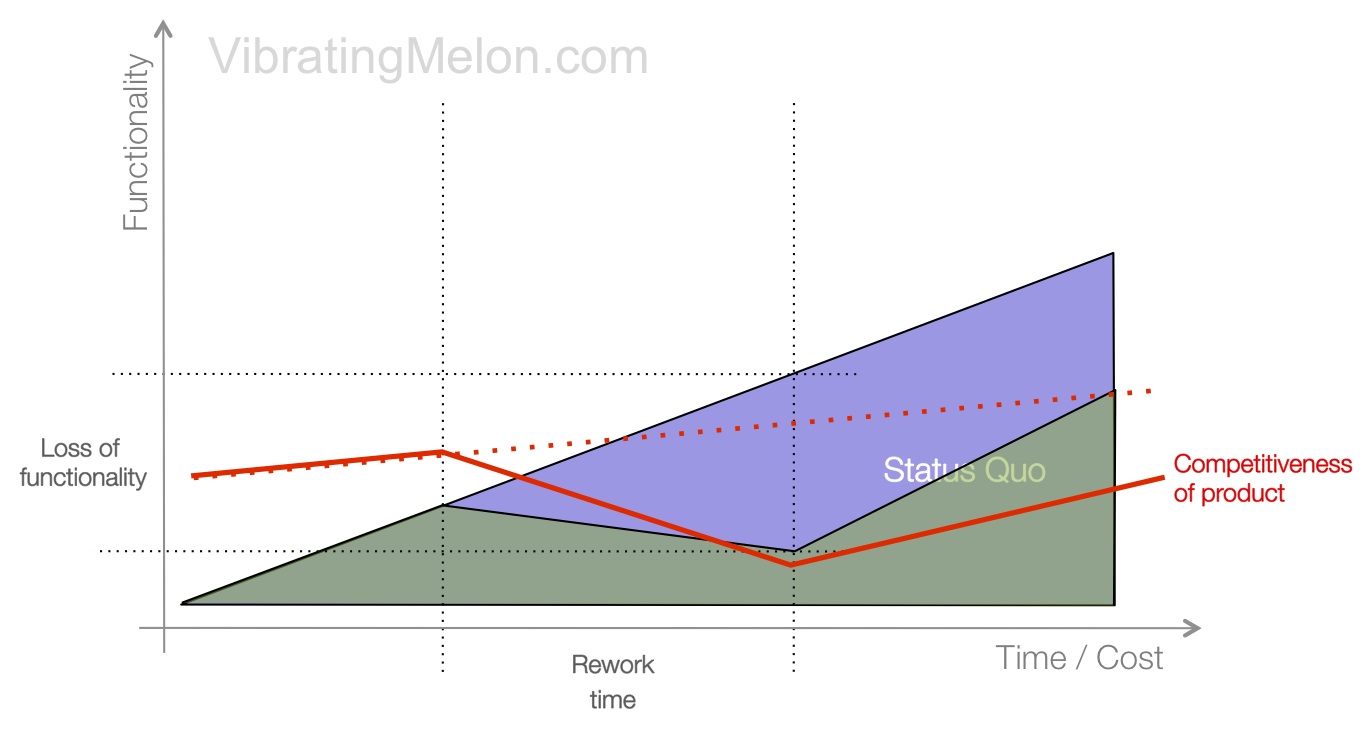

It gets worse.

Not only does the rewrite often take longer than expected but functionality is not even flat at the end of it – functionality is actually lost as a side-effect of the rewrite.

That’s because all of those small but important features, tweaks and bug-fixes that were in the original product don’t get reimplemented during the rewrite. (These are the “hairs” that Joel discusses.)

Remember that the main focus of the rewrite in the developer/architect’s mind is generally to build a better architecture and these small features don’t seem important and mess up this beautiful new architecture.

Plus, however good the developers are, they will introduce new bugs that will only get exposed and fixed through usage.

The net result is that the product after the rewrite is, in the eyes of the end-user and the market, worse than the product before the rewrite, even if it’s better in the eyes of the developer.

This pushes the break-even point out even further in terms of functionality, and the competitiveness of your product will take a long time to recover. If you listen carefully, you can probably hear your competitors wetting themselves laughing at your folly.

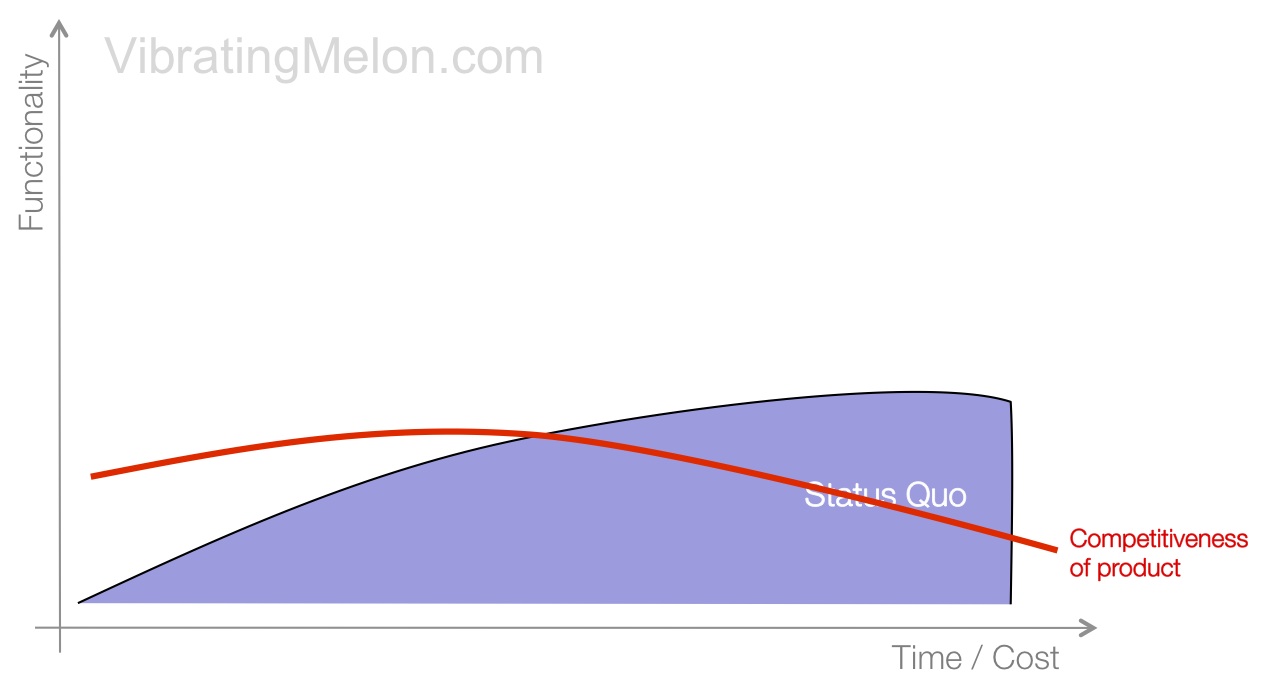

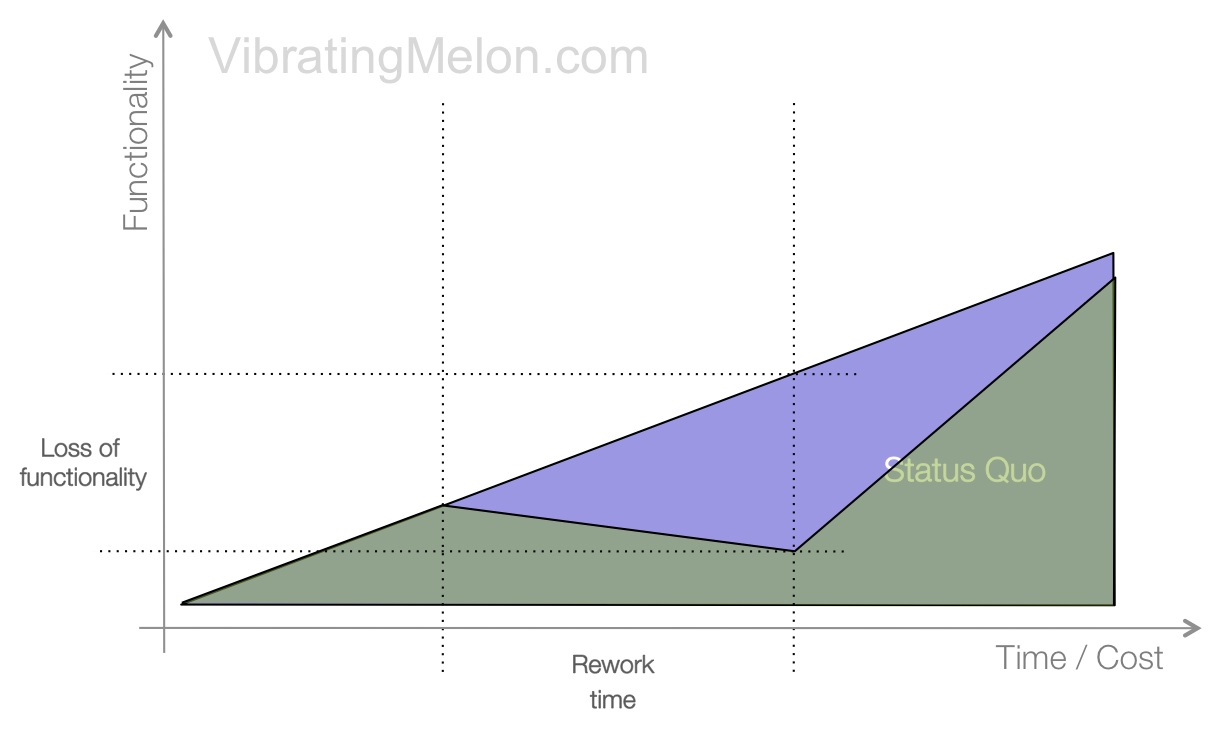

What tends to go wrong – Part 3

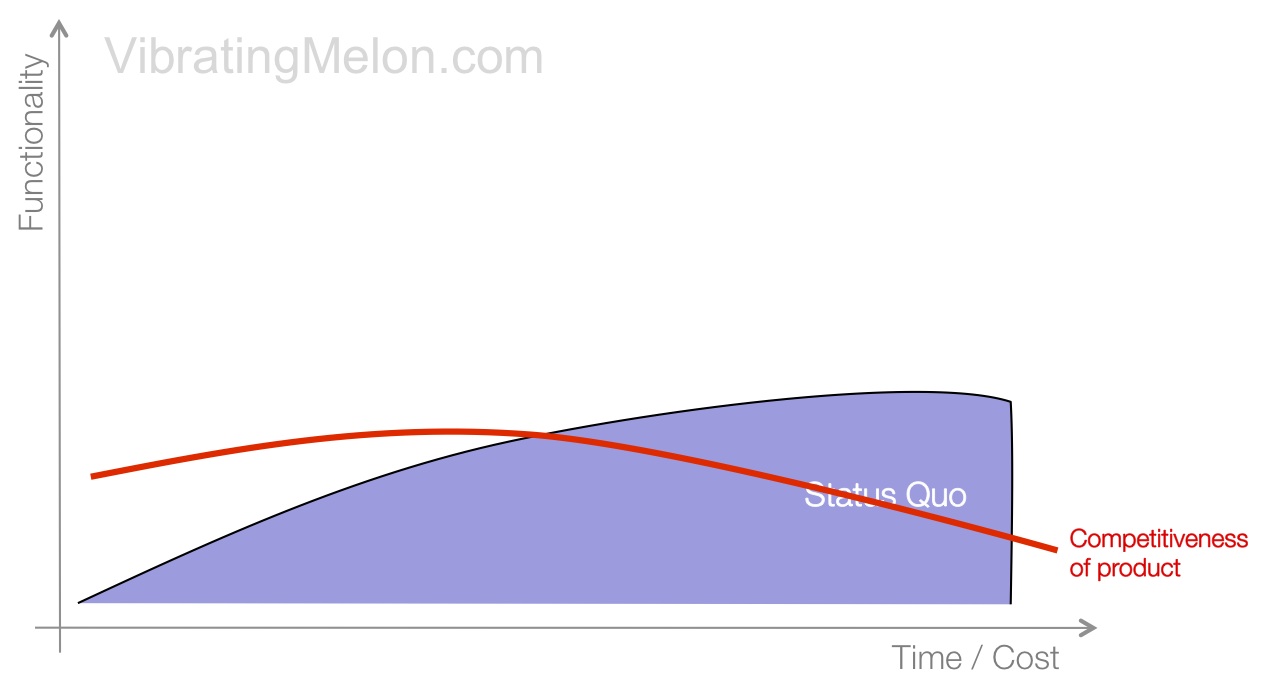

Oh dear.

The nail in the coffin is that, not only does the rewrite take longer than expected, and functionality get lost in the process, but the benefits of the new technology/language/framework turn out to not be nearly as great as claimed. Meanwhile, if you’d stuck with the status quo, you would have got more productive due to normal learning effects – i.e. don’t forget that while your team is climbing the learning curve with the new technology, you would have been getting better with the old one anyway.

Any one of the 3 problems above is potentially fatal but all 3 together is definitely so. Competitiveness of your product will likely never recover.
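To put rough numbers on how the three problems compound, here is a toy model of the break-even point. It is only a sketch under simplifying assumptions that are mine, not taken from the charts: functionality grows in a straight line, the rewrite adds nothing while it is in progress, and the function name, parameters and figures are all invented for illustration.

```python
import math


def break_even_month(rewrite_months, old_rate, new_rate, lost_functionality=0.0):
    """Month at which the rewritten product catches up with the status quo.

    Toy assumptions (illustrative only):
      - the status quo gains `old_rate` units of functionality per month
      - the rewrite gains nothing for `rewrite_months`, then `new_rate` per month
      - `lost_functionality` units are dropped during the rewrite
    Solves: -lost + new_rate * (t - rewrite_months) = old_rate * t
    """
    if new_rate <= old_rate:
        return math.inf  # the rewrite never catches up
    return (new_rate * rewrite_months + lost_functionality) / (new_rate - old_rate)


# The pitch: 6 months of rewriting, then twice the productivity.
print(break_even_month(6, old_rate=1.0, new_rate=2.0))   # 12.0

# Part 1: the rewrite takes 12 months instead of 6.
print(break_even_month(12, old_rate=1.0, new_rate=2.0))  # 24.0

# Part 2: six months' worth of small features and fixes is also lost.
print(break_even_month(12, 1.0, 2.0, lost_functionality=6.0))   # 30.0

# Part 3: the new technology is only 25% more productive, not 100%.
print(break_even_month(12, 1.0, 1.25, lost_functionality=6.0))  # 84.0
```

In this made-up scenario the break-even point moves from one year to seven, and if the new technology turns out to be no more productive than the old one, it never arrives at all.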

Why does this happen?

So, the above sections discuss what can go wrong. It might help to understand why this happens.

In Joel’s seminal post cited above, he makes the point, “It’s harder to read code than to write it.”

Very true – it’s also more fun to write new code than learn someone else’s code.

Developers like to feel smart and, intellectually, learning someone else’s code can seem like a lose-lose scenario to a lot of developers – if the code is bad, it’s painful to learn and you’re going to have to fix it. On the other hand, if it’s better than you would have written, it’s going to make you feel stupid.

Also, bear in mind that the fundamental motivation of most (not all) engineers is to Learn New Stuff ™. They are always going to gravitate towards new things over old problems they feel they’ve already solved. Again, I’m not faulting developers for this – it doesn’t make them bad people but, if you’re a non-developer, it’s critical you understand this motivation.

Put it this way: would you rather be the guy that architected the Golden Gate Bridge or one of the guys hanging off it on a rope, scraping off rust and repainting it?

Another problem is that when you rewrite, you typically make rapid progress early on which gives false validation to the decision to rewrite. It makes you feel smart – what a beautiful cathedral I’m building.

That’s because you’re writing code in a vacuum. No one is using the code yet; certainly no actual users. But, as you start to reach launch date, all those small features, tweaks and bug-fixes – all that learning that was encapsulated in your old product – start to become conspicuous by their absence.

The bottom line is that, when the case to rewrite is being made, it is not comparing apples to apples – it is comparing theoretical benefits with actual benefits. The actual benefit in question being, of course, that you have an existing product that works.

Comparing the pros and cons of writing a new application in language A versus B is not the same as comparing an application already written in language A versus rewriting that application in language B. Why? Because any productivity gains of one language over the other are typically massively overridden by the loss of all the domain knowledge, testing and fixes encapsulated in the existing product.

Should you EVER rewrite?

So, are there ever circumstances when you should rewrite? My answer here is an emphatic “maybe”.

Let’s consider some of the possible situations:

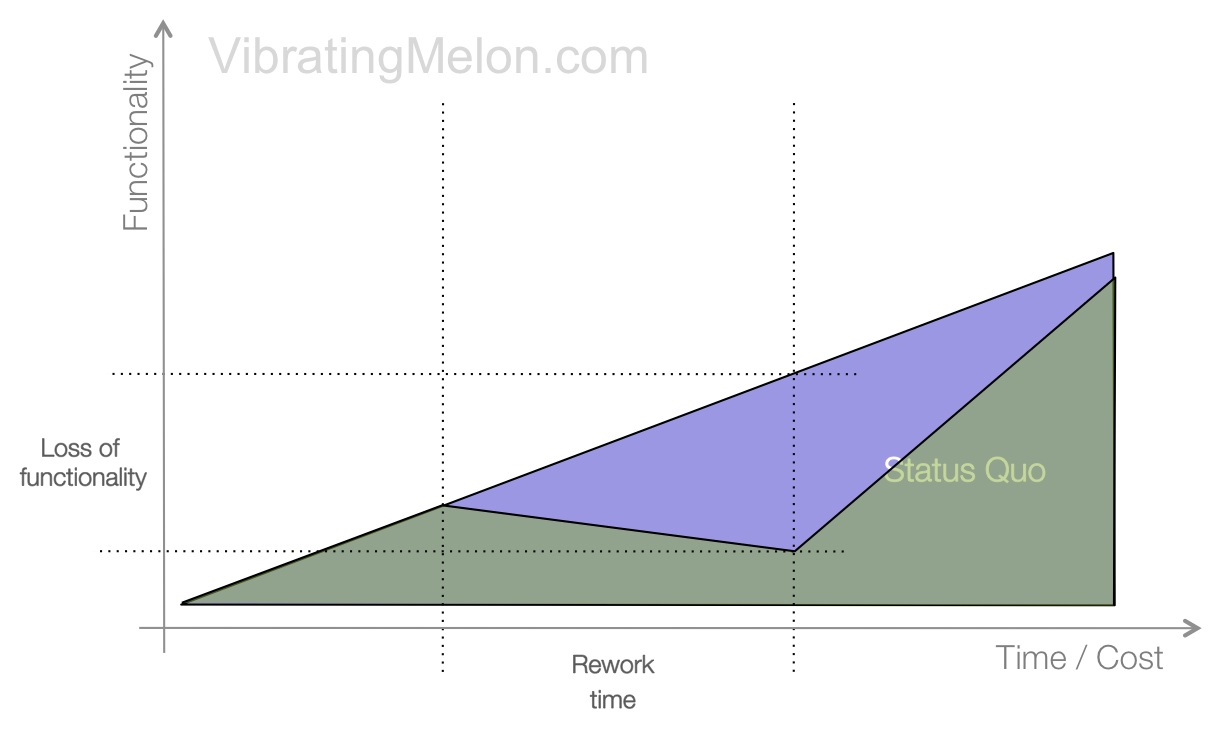

1. An irretrievably sick code-base

The symptom here is that it takes exponentially longer to add each new feature – per the chart above. Another symptom is that defect reports continue to come in as fast as, or faster than, you can fix them – you’re treading water.

However, an irretrievably sick development team is a much more likely culprit than an irretrievably sick product.

Don’t let developers convince you that they can’t maintain a codebase – what they most often mean is that they don’t want to maintain a codebase.

2. The developers who wrote the code are not available

If you buy/find/inherit a codebase, make sure you have access to as many of the developers who wrote it as possible, for as long as possible. If you didn’t…doh!

Again, don’t fall into the trap – developers will always prefer writing new code of their own to learning how the existing code works.

3. A genuine change to a new problem domain

Sometimes, some of the fundamental assumptions and technology choices may no longer be valid for the problem domain that your product is addressing. But, then I’d argue, you’re really talking about building a new product rather than rewriting an existing one.

4. Fundamentally incorrect or limiting technology choices

In some cases, the team that originally built the product may have made some poor choices in the technologies to use or the approaches to take. They may simply not map well to the problem domain of your product. However, in my experience, this is true much less often than developers claim it’s true.

Also, there are points where you start to hit fundamental scalability limits of certain technologies. However, keep in mind that if you’re hitting those scalability limits, someone else probably has too before you, and solutions to the problem exist.

So, if you are even entertaining the suggestion of a rewrite, make sure you get the developers to give you a specific cost-benefit analysis of the rewrite. Show them the charts above and get them to tell you why these problems won’t occur in this case, even though they occur in almost every case.

If and when you are convinced that this is one of those rare situations where a rewrite is the right approach, you need to make it surgical: where will you make the incision? How deep do you cut? What are the risks? What are the potential side-effects? How can you make sure no functionality is lost? How can it be done incrementally?

Divide the rewrite into a series of smaller changes, during which functionality absolutely cannot be lost. Rewrite one part of the system at a time, with a well-defined, understood and testable interface between old and new.
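As a rough sketch of what a “testable interface between old and new” might look like, the example below runs an old and a rewritten version of one small piece of logic on the same inputs and reports every disagreement. Everything in it is hypothetical: the shipping-fee rule, the function names and the numbers are invented purely for illustration.

```python
def legacy_shipping_fee(weight_kg):
    """Old code, including one of the 'hairs': parcels under 0.5 kg pay a flat fee."""
    if weight_kg < 0.5:
        return 2.0
    return 2.0 + 1.5 * weight_kg


def new_shipping_fee(weight_kg):
    """Rewritten code with a cleaner formula that silently drops the flat-fee rule."""
    return 2.0 + 1.5 * weight_kg


def find_regressions(old, new, inputs, tolerance=1e-9):
    """Return (input, old_result, new_result) for every input where the two disagree."""
    return [
        (x, old(x), new(x))
        for x in inputs
        if abs(old(x) - new(x)) > tolerance
    ]


sample_weights = [0.2, 0.5, 1.0, 10.0]
regressions = find_regressions(legacy_shipping_fee, new_shipping_fee, sample_weights)

# Keep the old code in service until the new code matches it on every input.
shipping_fee = legacy_shipping_fee if regressions else new_shipping_fee

for weight, old_fee, new_fee in regressions:
    print(f"{weight} kg: old fee {old_fee:.2f}, new fee {new_fee:.2f}")
```

Here the check catches the forgotten flat fee for light parcels, so the old implementation stays live. The idea is that each small piece only switches over once the comparison comes back clean, which is one way to hold to the rule that functionality cannot be lost along the way.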

Better still, don’t rewrite.

Good luck.

Agree, disagree? Please leave a comment.